Details

Description

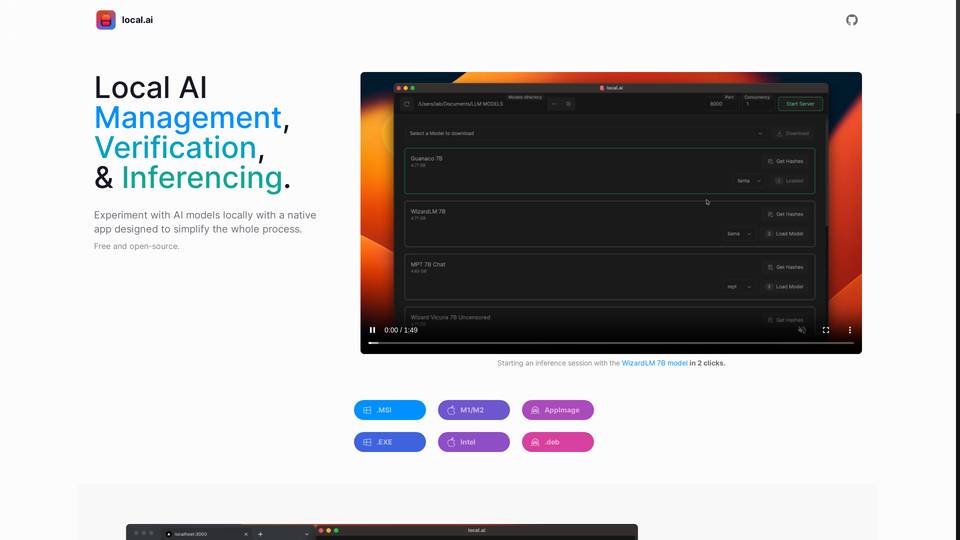

The Local AI Playground is a free, open-source native app for experimenting with AI models locally. It requires no technical expertise and no GPU, and it simplifies managing, verifying, and running inference on AI models. It supports various file formats for easy installation. Its compact, memory-efficient Rust backend offers CPU inferencing, adaptive threading, and GGML quantization.

You can manage your AI models in one centralized location, keep them in any directory, and sort them by usage. The tool checks the integrity of downloaded models with digest verification and shows information such as license and usage chips. You can also start a local streaming server for AI inferencing in two clicks. Upcoming features include GPU inferencing and more advanced server management. With these model management, verification, and inferencing capabilities, the Local AI Playground can power AI apps both offline and online.
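To make the idea of digest verification concrete, here is a minimal Python sketch of how a downloaded model file could be checked against a published digest. It is not the tool's own implementation: the description does not say which hash algorithm the app uses, so SHA-256 is an assumption, and the function names `file_sha256` and `verify_model` are hypothetical.

```python
import hashlib


def file_sha256(path, chunk_size=1 << 20):
    """Return the hex SHA-256 digest of a file, reading it in chunks.

    Chunked reads keep memory use flat even for multi-gigabyte model files.
    """
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        # iter() with a sentinel stops when read() returns an empty bytes object.
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_model(path, expected_hex):
    """Return True if the file's digest matches the expected hex string.

    Assumption: the publisher provides a SHA-256 digest in hexadecimal.
    """
    return file_sha256(path) == expected_hex.strip().lower()
```

A caller would pass the path of the downloaded file and the digest published alongside it, and treat a `False` result as a corrupted or tampered download.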

Link